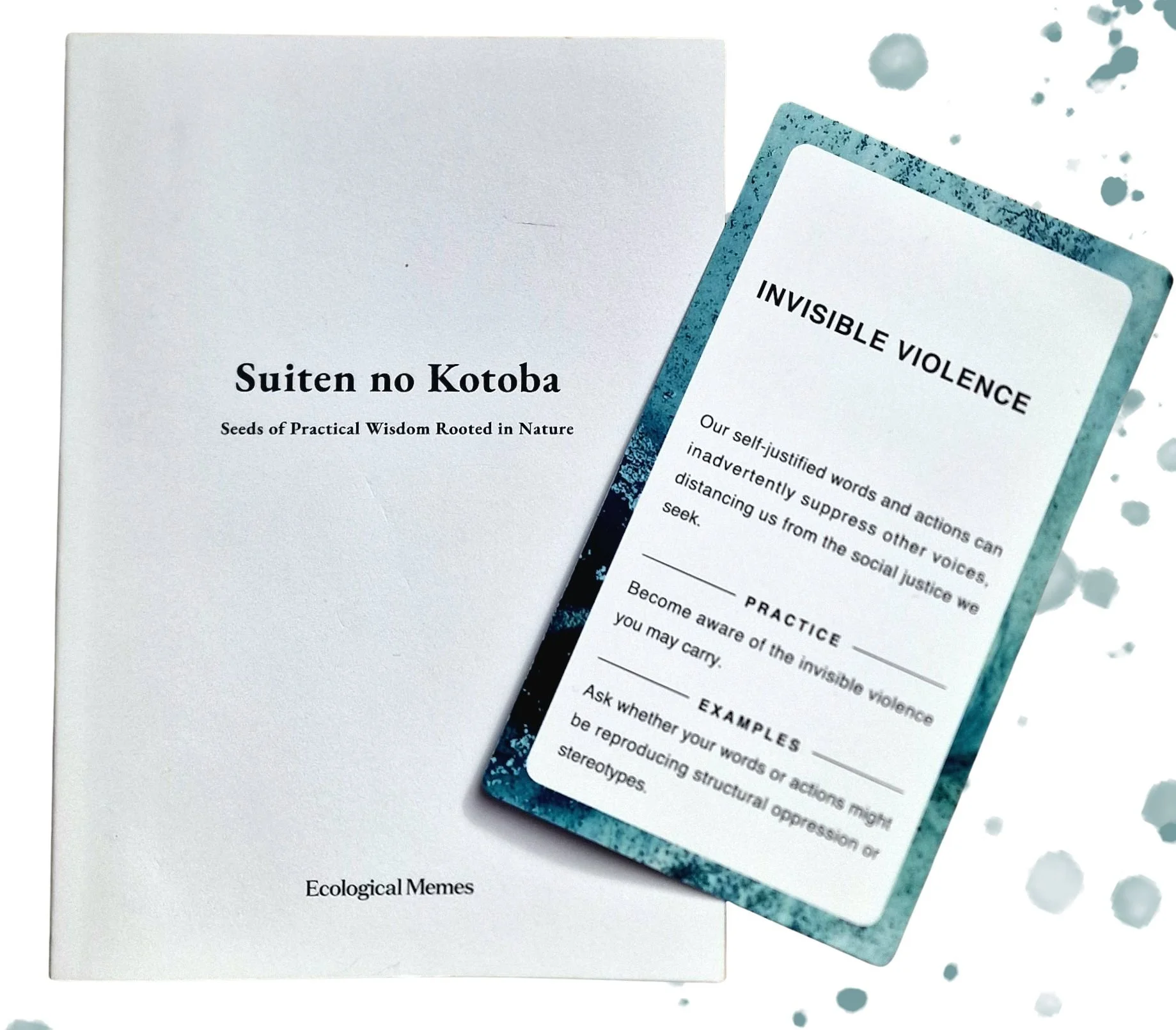

In October 2025, I had the opportunity to participate in a workshop led by the Japanese organisation Ecological Memes. The workshop centred around a card deck called Suiten no Kotoba, which offers 27 ‘Seeds of Practical Wisdom Rooted in Nature’ that have emerged through Ecological Memes’ process of exploration. The accompanying booklet explains that “Suiten” is a Buddhist word coined by Japanese polymath Minakata Kumagusu. It means a place where a myriad of truths and bonds gather and co-arise dependently. It was this card deck, and in particular the ‘invisible violence’ card, that inspired me to host a Bohm Dialogue on Human, More-than-Human and Artificial Intelligences, which took place on Saturday 21st February.

The Suiten no Kotoba card deck, created by Ecological Memes

I am grateful to Newspeak House for generously hosting this dialogue, and to the Newspeak fellows and curious participants who attended. Whilst participation in Bohm Dialogue does not require ‘expertise,’ it was certainly valuable to have those working directly with AI contribute to the dialogue. Similarly, those experts shared, during the dialogue, the value of hearing perspectives from outside their usual work. ‘Siloed knowledge’ is often cited as a barrier to innovation and change, and Bohm Dialogue allows us to dissolve the boundaries of knowledge and experience, so that a group can think together and harness collective human intelligence.

The dialogue set-up - a circle of chairs to ensure inclusion and non-hierarchy, and a centrepiece to remind participants that in dialogue we talk to the centre (not directly to individuals).

For those not familiar with the Bohm dialogue process there are a few aspects to note:

Bohm worked with a ‘no agenda’ approach to dialogue, in that there was never a specified topic or theme, other than the intention to inquire into the nature of human thought and consciousness. In my work with Bohm Dialogue, I have found that it is possible to hold a container of more focused inquiry, whilst still adhering to the principles of the dialogue requiring no outcome or group agreement. To support this, I incorporate ‘framing exercises’ individually, in pairs and small groups. These simple creative exercises are designed to cultivate curiosity and stimulate deeper thinking on the broad themes of the inquiry that can be explored in more depth during the dialogue.

Whilst groups benefit from a facilitator to introduce and hold the process, over time a regular and committed group would not require one. A key aspect of the dialogue practice is co-responsibility for the group, and the ability for each person to feel enabled to speak freely and authentically, as well as to listen deeply and respectfully to others. In essence, it is the most natural human activity: to gather in a circle and listen, share meaning, and think together. It is perhaps a sign of the fragmentary nature of our communication and relationships, with which Bohm was so concerned, that this practice feels uncomfortable, confronting, or of little value to many people.

Participants of Bohm Dialogue often reflect that the experience of the process itself is as valuable as what is shared and emerges during it. This is a different kind of interaction with others than our everyday communication. It invites the intention to slow down, listen deeply, suspend judgement and inquire into the underlying assumptions and biases that form much of our thought. The Bohm dialogue philosophy makes clear that each participant is of equal value, both in their voicing and listening roles. However, what we are ‘hearing’ collectively as a group is subject to the interpretation of each individual. At the end of each dialogue, whilst we have all heard the same words, it is clear that we do not always share the same meaning.

I speak to these aspects of Bohm Dialogue to make clear that the reflections I share below are those that stayed with me after the dialogue, that I had my own starting place, which included the design of the framing exercises, and that my memory or interpretation of what was shared may differ from that of others who attended. As is the nature of Bohm Dialogue, some of the points shared below were comments from one person, while others were threads that we returned to and explored together in more depth. I have done my best to group them into themes, so they do not appear in the chronological order in which they emerged in the dialogue.

What do we consider to be intelligence?

One participant questioned why we call AI ‘intelligence’. This raises the important question of whether we, as humans, have collectively agreed on or understood what intelligence is. Bohm wrote that “the deep source of intelligence is the unknown and indefinable totality from which all perception originates,” implying that on one level intelligence could be considered to lie outside of our physical bodies, yet within the fabric of the whole.

Another participant drew our attention to the fact that AI is only programmed with what is written online or shared with it. There is a lot of knowledge and wisdom that is not written down, for example Indigenous and community knowledge. This is missing ‘intelligence,’ and speaks to the exclusion of such knowledge and practice from much of science and academia. Not only do we tend to be human-centric, we also tend to be Western-, scientific- and academic-centric in terms of what is considered ‘intelligence.’

The group also acknowledged that research has shown that bias is being programmed into AI, further perpetuating harmful thinking and behaviours such as racism, sexism and discrimination.

What does it mean to be human?

We spoke multiple times about the anthropomorphisation of AI, and how it has led some people to believe that AI has emotions and genuinely cares about them. As stated by one of the AI developers in the group, who expressed great concern about this, AI is simply a predictive learning model; it is an inert mass of numbers and data until prompted. The one-sided nature of AI relationships was also noted: AI speaks words of encouragement, which at first may seem positive, but human relationships are messy and complicated. All feelings are an expression of life, and we need to be able to navigate and overcome ‘difficult’ emotions and experiences in order to build emotional intelligence and resilience. If AI removes any need to face our difficult emotions, what will be the impact on our relationships?

It is easy to see why many people connect easily to AI. It could be seen as a natural extension of being made to feel like machines - to show up productive, consistent, efficient and ‘positive,’ and to have their experience of time reduced into production units.

Another perspective on the anthropomorphisation of AI is that human conceptual thought is deeply metaphorical in nature, so if we don’t make AI in ‘our image,’ i.e. anthropomorphise it, then what is our point of reference? Is this just human nature? As we reject our more emotional nature, are we moving further towards a human-machine identity?

One interesting observation on how humans differ from AI is that AI cannot hold contradiction, and that perhaps the ability to do so is what makes us uniquely human.

Towards the end of the dialogue, some participants raised the question of whether AI would have been able to do what we did together in dialogue. It seemed that the general feeling was no, it could not have followed the flow of meaning, and generated the thinking and insight that emerged. Dialogue is something creative, and just like intelligence, creativity is something that may benefit from further co-inquiry into its true nature.

Ecological and social impacts and implications of AI

Towards the end of the dialogue, one participant stated that, “to look at the pros and cons of AI, we need to put the ecological issue to one side.” There was a clear, if uneven, divide in the group between those of us, myself included, who expressed anger at the lack of concern for or consideration of the ecological impacts of using AI, and those who were neutral or indifferent to this.

One participant shared that the climate crisis is real and present for many people and communities around the world: communities who are experiencing drought and water scarcity, and noise, light and air pollution due to the data centres required to sustain AI, as well as the people working in low-paid, poor, often life-threatening conditions to mine the rare earth minerals required for our endless addiction to the latest technological device. There is extensive and ongoing environmental and social injustice woven into the advancement of technology, which perhaps some people see as a ‘price to pay,’ and I, for one, can’t help feeling enraged by this. I appreciate that the multiple crises we currently face as humanity are simply too much for individuals to cognitively and emotionally manage, and that one coping mechanism is to turn away from the discomfort that arises when being asked to think and feel about these issues. It seems somewhat ironic that it is our phones that provide a window into 24-hour live-stream coverage of the suffering of the most vulnerable humans and nature, and yet most of us feel powerless to do anything about it.

People say technology is the answer, but what is the question?

‘Just because we can, doesn’t mean we should’ was a sentiment shared by one person in relation to the advancement of technology. What is it for, and what is it rooted in? Greed and speed. Technology companies and early adopters seem to be in a rush, but where are we trying to get to?

AI is just another layer of all the technology we have created before it. When we look at that history, it isn’t surprising that it is having a negative impact.

Many people resign themselves to believing that advances in technology are inevitable, instead of asking, ‘do we really need it?’ Is technology really making us happy, and how do we measure progress? What would be the priorities for progress if more countries replaced GDP with wellbeing indicators, such as the Gross National Happiness index in Bhutan?

One person posed a more sinister side to AI: we are willingly giving AI a lot of personal information; should we be? Are we setting ourselves up for manipulation by large tech companies?

A hopeful future?

The more hopeful or pro-AI voices in the group posed philosophical questions such as: will AI be the ‘mirror’ that reflects back to us the flaws of humanity so we can save ourselves?

Another reflection was that everything that has been created on Earth - human, more-than-human, and machine - has come from Earth, reminding us that there is some comfort to be had when we take a deep time perspective on the evolution and existence of life on Earth.

We explored the wide range of uses of AI, noting that there are of course positive uses, e.g. medical research. However, this feels in sharp contrast to the more ‘social media’ use of AI that encourages people to jump on purposeless trends, with no consideration of the resources used. How can we encourage responsible use and discernment?

If we can recognise collectively that AI is just a tool, then we will have more control over its use. However, this shift towards more discerning use will only come about if more people are given the time to participate in spaces for reflection and thinking together, a practice that is uncommon in our fast-paced, technologically driven society.

A creative lunchtime activity to reconnect participants to one inherent aspect of being human.

For my final reflection, I return to the Suiten no Kotoba card of Invisible Violence and the Ecological Memes workshop. I picked this card in response to a prompt to choose the card I felt least drawn to, or even repelled by. We were then invited to discuss our cards in small groups. Talking about this card created a surge of energy and emotion that was not present when I was reflecting on cards I felt more resonant with. This was a valuable insight into the energy or wisdom we might be suppressing by turning away from the things that make us feel uncomfortable, or that we don’t identify strongly with. AI makes me feel uncomfortable for many reasons, and I am grateful that instead of holding that discomfort alone, I was able to bring together a group of humans to co-inquire into AI, to hear perspectives both similar to and different from my own, and in some small way, create meaning together.

“The basic idea of this dialogue is to be able to talk while suspending your opinions, holding them in front of you, while neither suppressing them nor insisting upon them. Not trying to convince, but simply to understand. The first thing is that we must perceive all the meanings of everybody together, without having to make any decisions or saying who’s right and who’s wrong. It is more important that we all see the same thing. That will create a new frame of mind in which there is a common consciousness. It is a kind of implicate order, where each one enfolds the whole consciousness. With the common consciousness we then have something new - a new kind of intelligence.”